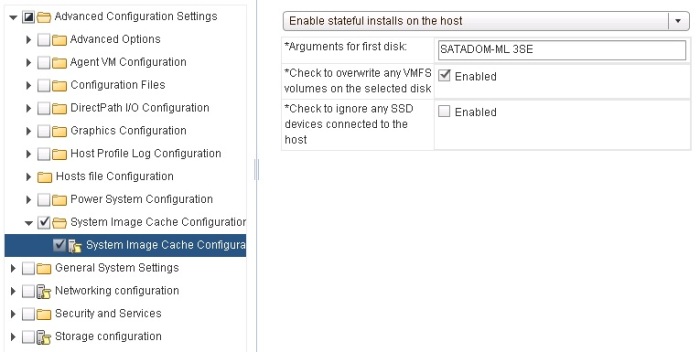

I ran into an interesting problem the other week while upgrading a customer’s vROps environment from version 6.0.2 to 6.4. During the upgrade, it appears that the existing Python Actions adapter instances were not automatically migrated to the new vCenter adapter actions setting, a change introduced in version 6.3.

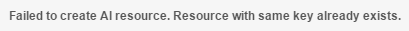

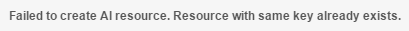

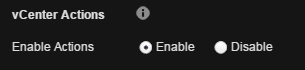

What I found was that when I tried to manually “Enable” Actions within the vCenter adapter (under Manage Solution), I got the following error: “Failed to create AI resource. Resource with same key already exists.”

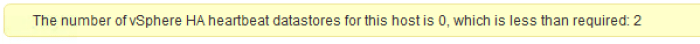

After reaching out to the team internally, I found that this happens because the original Python Actions adapter still exists after the upgrade, so the solution was to remove it manually. The Python Actions adapter was originally a Solutions adapter, and since the “Python Actions Adapter” solution no longer exists post-6.3, removing it meant using the REST API.
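To make the REST approach a little more concrete, here is a rough Python sketch of the two URLs involved: one to list the leftover adapter instances and one per instance to delete it. Treat everything specific in it as an assumption on my part rather than the documented procedure: the `/suite-api/api/adapters` paths, the `adapterKindKey` query parameter, the `PythonRemediationVcenterAdapter` kind key, and the `adapterInstancesInfoDto` / `id` field names in the JSON response should all be checked against the API documentation for your vROps version. The function names are purely illustrative, and the sketch only builds URLs; it sends nothing.

```python
from urllib.parse import quote

# Assumed adapter kind key for the old Python Actions adapter; verify it
# against your own environment before relying on it.
ADAPTER_KIND = "PythonRemediationVcenterAdapter"


def list_url(host, adapter_kind=ADAPTER_KIND):
    """URL for a GET that lists adapter instances of one kind (assumed endpoint)."""
    return (
        f"https://{host}/suite-api/api/adapters"
        f"?adapterKindKey={quote(adapter_kind)}"
    )


def delete_urls(host, listing):
    """URLs for the DELETE calls, one per adapter instance in the listing.

    `listing` is the parsed JSON body of the GET above. The
    'adapterInstancesInfoDto' and 'id' field names are assumptions.
    """
    return [
        f"https://{host}/suite-api/api/adapters/{quote(instance['id'])}"
        for instance in listing.get("adapterInstancesInfoDto", [])
    ]
```

The idea would be to issue an authenticated GET against `list_url(...)`, feed the parsed response into `delete_urls(...)`, and then send a DELETE to each resulting URL with curl or any HTTP client.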

There is already a KB article detailing how to do this, found here; however, when I ran the curl command on the vROps master node appliance, it failed with a strange error:

curl: (35) error:14077458:SSL routines:SSL23_GET_SERVER_HELLO:reason(1112)

After some searching I came across this post, which pointed me to a curl bug (reported against Ubuntu in that case) that seemed related to the issue I was having.

So I ran the same curl command with a more up-to-date version of curl (> 7.40) and it worked perfectly. I was then able to remove the old Python Actions adapter instances and then successfully select “Enable Actions”.
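If you want to check up front whether a given machine's curl is likely to hit the same SSL error, a small helper like the one below can compare the installed version against the 7.40 threshold. This is only a sketch: the threshold is simply the version I mention above, not a confirmed "fixed in" release, and the function names are my own.

```python
import re
import subprocess

# Threshold taken from the post (> 7.40); not an exact "fixed in" version.
MIN_CURL = (7, 40)


def parse_curl_version(version_output):
    """Extract (major, minor, patch) from the first line of `curl --version`."""
    match = re.match(r"curl (\d+)\.(\d+)(?:\.(\d+))?", version_output)
    if match is None:
        raise ValueError("unrecognised curl version output")
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)


def curl_is_newer_than(version_output, minimum=MIN_CURL):
    """True when the (major, minor) version is strictly greater than `minimum`."""
    return parse_curl_version(version_output)[:2] > minimum


def installed_curl_version_output():
    """Return the raw output of `curl --version` from the local machine."""
    result = subprocess.run(
        ["curl", "--version"], capture_output=True, text=True, check=True
    )
    return result.stdout
```

Calling `curl_is_newer_than(installed_curl_version_output())` on the machine in question tells you whether it is worth running the command there or from a workstation with a newer curl.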

Hopefully this helps someone else!