Heaps has happened in my home lab over the past 18 months. Not only am I now running ESXi 6.7 U3, I also have a second host with the same specs as the first, and the two now run together in a 2-Node vSAN configuration.

As this is 2-Node vSAN, the witness appliance runs as a virtual machine on my HTPC, using VMware Workstation.

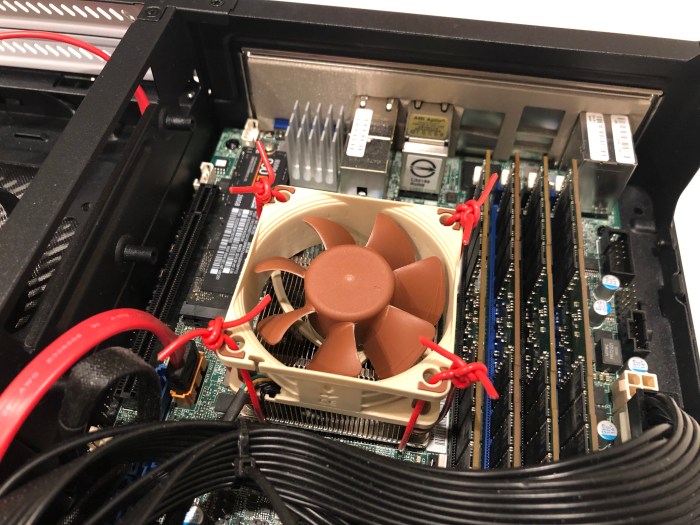

The insides of both hosts are almost exactly the same as before, apart from an additional 1TB SSD in each (for a total of 4TB of raw storage) and an extra Noctua fan in each for added cooling.

As the E200-8D motherboard has embedded 10GbE NICs, I am using a single, short CAT-7 cable to connect the two hosts directly for vSAN and vMotion traffic. Initially I tried using 2 cables for the direct-connect (as there are 2x 10GbE NICs per host), but this caused the temperature of the 10GbE network adapter to hover around 75-80°C, and at temperatures that high the performance of the NICs was pretty poor. Using only a single NIC dropped the temps down to about 60°C, and as this is just a homelab, the load on the servers didn’t really justify using both NICs anyway.

The Noctua NF-A6x25 PWM CPU fan was previously just resting on the heatsink using the rubber screws. To secure it to the mobo, I borrowed an idea from someone online who was using spare wires to tie it down…which worked perfectly!

Unfortunately I can’t remember where I came across this idea…I’ll update this post with the link if I find it.

I’m pretty happy with the result: the lab runs cool and quiet, and doesn’t look too bad sitting in my lounge room.

And with the added bonus of IPMI on the Supermicro mobos, I can easily power on the hosts remotely whenever I need them for homelab work and demos.
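For anyone wanting to script the remote power-on, here is a rough sketch of one way to do it. It is not my exact setup: it assumes `ipmitool` is installed on the machine you run it from, that IPMI over LAN is enabled on the BMC, and that the password is in the `IPMI_PASSWORD` environment variable (which `ipmitool -E` reads). The function names, BMC address and username are made-up placeholders.

```python
import subprocess

# Chassis power actions this sketch allows (all are standard ipmitool subcommands).
ALLOWED_ACTIONS = {"on", "off", "soft", "status"}


def ipmi_power_command(bmc_host, user, action="on"):
    """Build the ipmitool argument list for a chassis power action.

    bmc_host and user are placeholders for your own BMC address and account.
    The password is not passed on the command line; -E tells ipmitool to
    read it from the IPMI_PASSWORD environment variable.
    """
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported power action: {action}")
    return [
        "ipmitool", "-I", "lanplus",
        "-H", bmc_host,
        "-U", user,
        "-E",
        "chassis", "power", action,
    ]


def run_power_command(bmc_host, user, action="on"):
    """Run the command and return ipmitool's output (raises on failure)."""
    result = subprocess.run(
        ipmi_power_command(bmc_host, user, action),
        check=True, capture_output=True, text=True,
    )
    return result.stdout.strip()
```

Calling `run_power_command("192.0.2.10", "admin")` (with a hypothetical BMC address and user) would ask that host to power on, and passing `action="status"` is a handy way to check whether a host is already up before a demo.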