
As many of you will already know, vSphere Replication 6.0 introduced the ability to isolate replication traffic from all other traffic in your datacentre. For many security-conscious organisations this was a big deal: they can now ensure that replication traffic is not routed the wrong way.

This is fine for traffic between the source and target sites. However, it isn’t entirely clear how to also isolate the NFC traffic between the vSphere Replication Server appliance and the ESXi hosts.

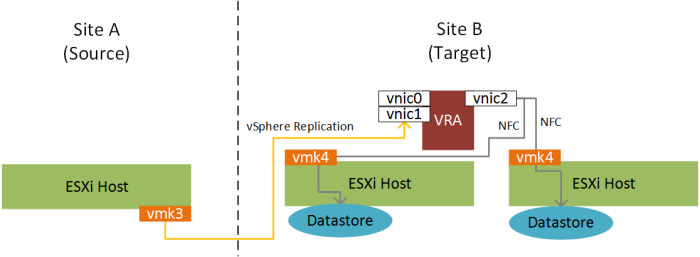

To recap, NFC (Network File Copy) is used to copy VM replication data from the vSphere Replication Server (VRS) appliance at the target site, to the destination datastores. Here’s a nasty diagram to illustrate:

Now, with vSphere Replication 6.x, we can create vmkernel interfaces on our ESXi hosts so that specific traffic (e.g. VR traffic or NFC traffic) is isolated to specific networks. But how do we ensure that the NFC traffic is routed correctly from our VRS to the ESXi hosts? By default, the VRS has a single vNIC, but you can add a second vNIC for incoming replication data (as documented for VR 6.1). This works well for the incoming replication data, but what about the outgoing NFC traffic?
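For reference, on ESXi 6.x the tagging itself is done per vmkernel interface, for example with `esxcli network ip interface tag add` and the `vSphereReplication` and `vSphereReplicationNFC` tags. The Python sketch below only assembles those command strings. The vmk3/vmk4 assignment mirrors the diagram further down and is an assumption about your environment, as is running the output in each host’s shell.

```python
from typing import Dict, List

# ESXi 6.x vmkernel traffic tags relevant to vSphere Replication.
VR_TAG = "vSphereReplication"      # outgoing replication traffic from the host
NFC_TAG = "vSphereReplicationNFC"  # incoming NFC traffic from the VR server

# Hypothetical mapping matching the diagram: vmk3 for VR, vmk4 for NFC.
VMK_TAGS: Dict[str, str] = {"vmk3": VR_TAG, "vmk4": NFC_TAG}


def tag_commands(vmk_tags: Dict[str, str]) -> List[str]:
    """Build one esxcli tagging command per vmkernel interface."""
    return [
        f"esxcli network ip interface tag add -i {vmk} -t {tag}"
        for vmk, tag in sorted(vmk_tags.items())
    ]


if __name__ == "__main__":
    # Assumption: the printed commands are run on each ESXi host (e.g. over SSH).
    for cmd in tag_commands(VMK_TAGS):
        print(cmd)
```

In a typical one-way setup, the `vSphereReplication` tag matters on the source-site hosts (outgoing replication) and the `vSphereReplicationNFC` tag on the target-site hosts (incoming NFC).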

I reached out to some colleagues internally and was told that this can be accomplished by adding a third vNIC to the VRS appliance, along with a static route to the network of the NFC-tagged ESXi vmkernel ports. Another nasty diagram to illustrate:

Where:

- vmk3: vmkernel interface tagged for outgoing vSphere Replication traffic from the ESXi hosts.

- vmk4: vmkernel interface tagged for inbound NFC traffic to the ESXi hosts.

- vnic0: Used for management traffic (e.g. VRS -> VRM).

- vnic1: Used for inbound replication traffic to the VRA/S.

- vnic2: Used for outbound NFC traffic from the VRA/S to the ESXi hosts.

(Note that other vmkernel interfaces/vSphere Replication appliances have been excluded from the diagram for simplicity).
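To see why the static route matters, here is a simplified Python model of how the VRS picks an outgoing interface. It uses plain longest-prefix matching (real Linux routing also weighs metrics and policy rules), and every subnet, gateway and address in it is hypothetical: vnic2 sits on 10.0.40.0/24, the NFC-tagged vmk4 ports live on a separate 10.0.41.0/24 network, and the appliance’s default gateway is on the management network behind vnic0.

```python
import ipaddress
from typing import List, NamedTuple, Optional


class Route(NamedTuple):
    network: ipaddress.IPv4Network
    gateway: Optional[str]  # None means the network is directly connected
    nic: str


def route(cidr: str, gateway: Optional[str], nic: str) -> Route:
    return Route(ipaddress.ip_network(cidr), gateway, nic)


# Hypothetical routing table on the target-site VRS appliance.
BASE_TABLE: List[Route] = [
    route("0.0.0.0/0", "10.0.0.1", "vnic0"),  # default route via management
    route("10.0.0.0/24", None, "vnic0"),      # management network
    route("10.0.30.0/24", None, "vnic1"),     # incoming replication network
    route("10.0.40.0/24", None, "vnic2"),     # NFC network on the VRS side
]

# Hypothetical static route to the network of the NFC-tagged vmk4 ports.
NFC_STATIC = route("10.0.41.0/24", "10.0.40.1", "vnic2")


def select_route(table: List[Route], dst: str) -> Route:
    """Simplified longest-prefix match: the most specific matching network wins."""
    addr = ipaddress.ip_address(dst)
    matches = [r for r in table if addr in r.network]
    return max(matches, key=lambda r: r.network.prefixlen)


if __name__ == "__main__":
    esxi_vmk4 = "10.0.41.21"  # hypothetical vmk4 address on a target host
    before = select_route(BASE_TABLE, esxi_vmk4)
    after = select_route(BASE_TABLE + [NFC_STATIC], esxi_vmk4)
    print(f"without static route: {before.nic} via {before.gateway}")
    print(f"with static route:    {after.nic} via {after.gateway}")
```

Without the static route, traffic to a vmk4 address falls through to the default route and leaves via vnic0 (management); with it, the more specific /24 wins and the NFC traffic leaves via vnic2. If vnic2 were on the same subnet as vmk4, the connected route alone would be enough. How the route is persisted on the appliance itself is outside this sketch.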

got nfi what this means but it’s said very impressively so it must be good

LOL. I’m feeling weird because I know exactly what it means!

I’m running into the same situation, although the replication source and target are on the same network and I only have one vCenter appliance. If I try to segregate the replication traffic onto a dedicated link, it just stops working. Please let us know if your idea works and provide details of the configuration.

Will this work if I’m trying to replicate VMs that are in two different datacenters but in the same vCenter?

Hi, yes, that should work fine. You can have separate vSphere Replication appliances in different datacenters under the same vCenter to replicate between the two locations.

Is it possible to do this with ESXi 5.5 and vSphere Replication 5.8? I think I can use a workaround to segregate the vSphere Replication traffic, but I’m not sure how I could segregate the NFC traffic, since ESXi 5.5 doesn’t support tagging a vmkernel interface for NFC.
